Developer and Agent eXperience

Where developers and AI agents work together

Secure agentic pair programming with a continuous feedback loop—fully customizable to your workflow.

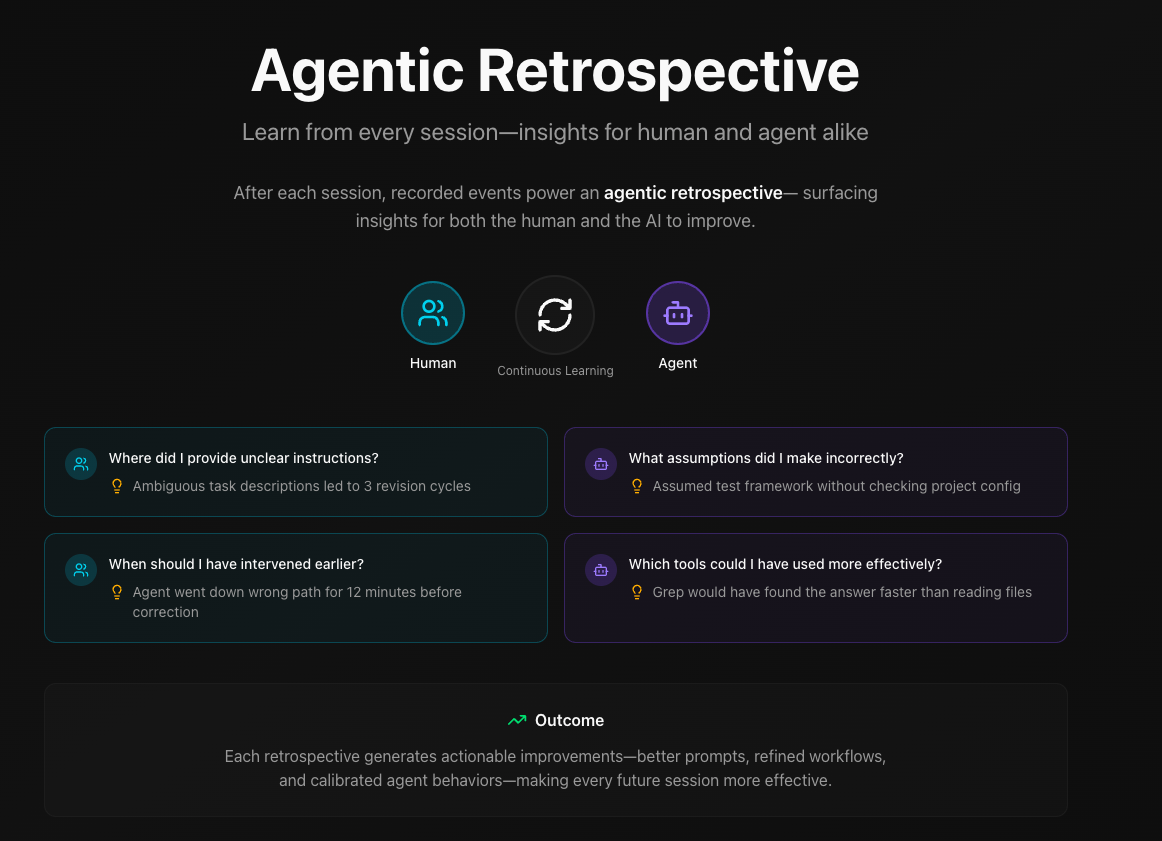

Evidence-based retrospectives for human-agent collaboration. A feedback loop where both humans and agents learn from each session.

The Problem

Developers are overwhelmed. The AI hype cycle is relentless—new tools, frameworks, and "game-changing" agents every week. What's real? What's noise? Even Andrej Karpathy admits he's "never felt this much behind as a programmer." How do you embrace agentic tools without drowning in slop or shipping code you don't understand?

AI agents are struggling too. Context engineering, memory limits, model selection, token costs, data privacy—getting agents to work well is its own skill. And the behavior is unpredictable: over-eager agents make sweeping changes without asking, while helpless agents stall without constant hand-holding. Without good test loops and feedback mechanisms, the output is garbage.

And security can't be an afterthought. Agents need sandboxed execution, proper identity, and auditable actions. Autonomy without guardrails is a liability.

What We're Building

Unified Context

Agents and developers share the same view of the codebase, project goals, and documentation.

Continuous Feedback Loop

Every session is recorded. Humans and agents learn together—what worked, what didn't, what's next.

Composable Tools

Mix and match the best models, agents, and workflows without lock-in.

Secure by Design

Capability-based security, audit trails, and fine-grained permissions for agent actions.
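
To make the idea concrete, here is a minimal sketch of how capability checks and an audit trail could fit together. It is an illustration only, not a description of the actual product: `Capability`, `AgentSession`, and `request` are hypothetical names, and the model (one action on one path prefix per capability) is an assumption chosen for brevity.

```python
import posixpath
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Capability:
    """Hypothetical grant: permits one action on paths under one prefix."""
    action: str        # e.g. "read" or "write"
    path_prefix: str   # e.g. "src/"

    def allows(self, action: str, path: str) -> bool:
        # Normalize first so "src/../.env" cannot slip past a "src/" prefix.
        normalized = posixpath.normpath(path)
        return action == self.action and normalized.startswith(self.path_prefix)


@dataclass
class AgentSession:
    """Hypothetical session: the agent holds only the capabilities it was given."""
    agent_id: str
    capabilities: tuple
    audit_log: list = field(default_factory=list)

    def request(self, action: str, path: str) -> bool:
        # Default deny: the request passes only if some capability allows it.
        allowed = any(c.allows(action, path) for c in self.capabilities)
        # Record every decision, allowed or denied, so it can be reviewed later.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent_id,
            "action": action,
            "path": path,
            "allowed": allowed,
        })
        return allowed


session = AgentSession(
    agent_id="agent-1",
    capabilities=(
        Capability("read", "src/"),
        Capability("write", "src/generated/"),
    ),
)
session.request("read", "src/main.py")    # covered by the read capability
session.request("write", "src/main.py")   # no write capability covers this path
```

The design choice worth noting is default deny: the agent can do nothing unless a capability explicitly grants it, and denied requests are logged alongside allowed ones so the audit trail shows what was attempted, not just what succeeded.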

Our Approach

- Developer-first. We build tools we want to use. If it doesn't make your workflow better, we won't ship it.

- Open standards. We use MCP, LSP, devcontainers, and open protocols. Your tools should work together, not against each other.

- Incremental adoption. Start with what you have. No rip-and-replace required.

- Transparent development. We share our thinking, research, and progress openly.

Learn more about our vision and what we're building.

Read: Introducing daax.dev