Sprint retrospectives were designed for a world where humans wrote all the code. That world is gone.

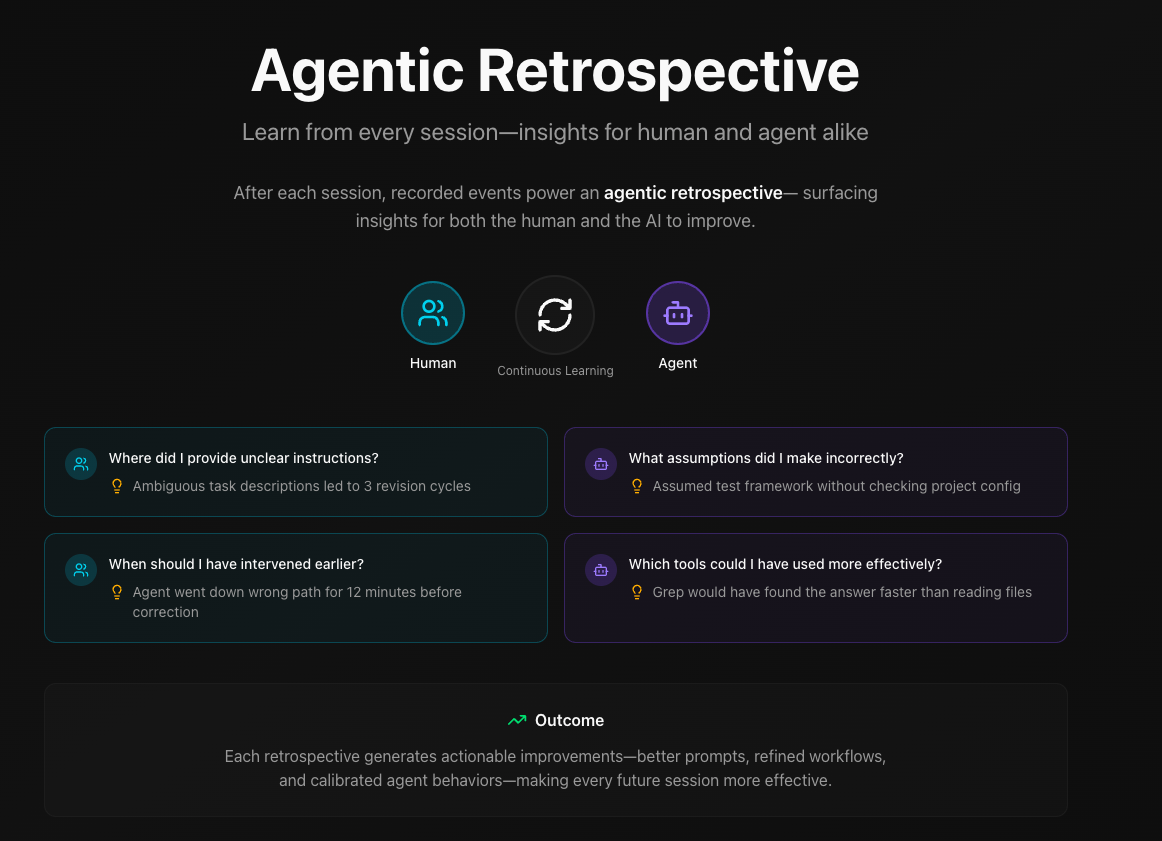

AI agents now make commits, open PRs, and ship architectural decisions. This opens up a new possibility: a feedback loop where both humans and agents learn from each session and get better over time. Not just the human improving their prompts, but the agent starting each session with accumulated context about what works for this codebase, this team, this workflow.

That's what we're building toward.

The Pace of Change

Consider what's happened in just sixteen months:

| Date | Milestone |

|---|---|

| Nov 2024 | MCP announced—Model Context Protocol |

| Feb 2025 | Claude Code launches—agentic CLI |

| Mar 2025 | MCP spec 2025-03-26—enhanced security |

| Jun 2025 | Hooks released—event-driven automation |

| Jun 2025 | Claude Flow—swarm orchestration (ruvnet) |

| Jul 2025 | Subagents—parallel task execution |

| Aug 2025 | AGENTS.md released—project instructions for agents |

| Sep 2025 | Claude Code 2.0 + Agent SDK—Sonnet 4.5 launches |

| Oct 2025 | Skills system—reusable agent capabilities |

| Oct 2025 | Beads—agent memory system (Steve Yegge) |

| Nov 2025 | Claude Opus 4.5—flagship model upgrade |

| Dec 2025 | Agentic AI Foundation—MCP + AGENTS.md to Linux Foundation |

| Dec 2025 | Agent Skills open standard—cross-platform skill format |

| Jan 2026 | Gas Town—multi-agent orchestration (Steve Yegge) |

| Feb 2026 | Opus 4.6 + Agent Teams—multi-agent orchestration |

New capabilities are shipping faster than teams can evaluate what works. By the time you've figured out your MCP server setup, there's a new paradigm to consider.

This isn't slowing down. The toolchain will look different in six months. And six months after that.

What to measure? and why?

The point isn't metrics for metrics' sake. It's the ability to objectively evaluate and continuously improve in a rapidly changing environment.

When a retrospective surfaces that 75% of PRs got superseded before merging, that's data. Maybe the initial specs could be clearer. Maybe adding a checkpoint before implementation would help. Maybe a new capability—like background agents for validation—would change the workflow entirely.

Without objective measurement, you can't tell what's actually working. You're guessing. And when the tools change every few weeks, guessing compounds into confusion.

With measurement, every sprint builds on the last. The collaboration gets tighter. The output gets better. When new capabilities ship, you have a baseline to compare against.

The Feedback Loop

Here's how it works (thus far):

- Measure what happened — Commits, PRs, decisions, rework patterns

- Surface what worked and what didn't — Signal, not blame

- Feed learnings back into the process — Update CLAUDE.md, adjust prompts, refine workflows

- Agent adapts — Next session starts with better context and clearer boundaries

The retrospective report shows metrics like:

| Metric | What It Signals |

|---|---|

| 75% PR supersession | Opportunity to evaluate spec clarity or add checkpoints |

| 2% testing discipline | Room to build validation into the workflow |

| 11.8% agent commits | Quantified contribution for capacity planning |

| 18h time-to-fix | Feedback loop can be tightened |

The metrics are the trigger. The value is in the conversation they start and the changes that follow.

Closing the Loop

When a retrospective reveals patterns—say, architectural decisions being made without confirmation—that insight goes somewhere useful:

Into CLAUDE.md: "Always confirm one-way-door decisions before implementing"

Into prompts: Clearer boundaries on agent autonomy

Into workflow: Checkpoints before certain changes

The agent reads CLAUDE.md at the start of each session. The learnings compound. What worked gets reinforced. What needs adjustment gets adjusted.

This is the difference between "AI-assisted development" and genuine human-agent collaboration. One is a person using a tool. The other is a partnership where both sides adapt based on shared experience.

What Gets Measured

Rework chains — Fix commits following features within 48 hours. An opportunity to objectively evaluate what would help: clearer specs, more testing, better context, or maybe a different workflow pattern entirely.

Decision quality — Do logged decisions include rationale and context? This reveals whether trade-offs are being captured for future reference—useful when you need to revisit a choice after the toolchain changes.

Code hotspots — Files changed 3+ times in a sprint. Core infrastructure that legitimately needs attention, or a design worth revisiting?

Agent attribution — What percentage of commits came from AI? Useful for understanding the collaboration mix and planning capacity as agent capabilities evolve.

The tool handles missing data gracefully. No decision logs? Notes the gap, continues. The goal isn't perfect telemetry—it's knowing what exists, where the blind spots are, and what to instrument next.

Request for Testers

This tool reflects how we work. Other teams use different agents, different workflows, different definitions of quality. The metrics that matter to you might not be the ones we built first.

Try it now:

npx @daax-dev/retrospectiveTakes 30 seconds. No installation. View the source on GitHub.

Tell us:

- What works and what's confusing

- What metrics would help your retrospectives

- What's missing from this feedback loop

- How you'd want this to integrate with your existing workflow

Get Involved

This is open source. We'll share what we're learning, incorporate ideas that make this better, and build alongside people who believe human-agent collaboration should keep improving—even as the tools underneath keep changing.

View on GitHubThat's the goal. Help us get there.