Docker is building microVM sandboxes. When the company that made containers mainstream decides containers aren't enough for AI workloads, that's not a product announcement. That's an admission. The security community has said it for years: containers were never a security boundary.

That distinction didn't matter much when humans wrote and ran the code. You trusted it because you wrote it, or someone on your team did. AI agents change the equation. When an LLM generates code and immediately executes it—installing packages, making network calls, writing to disk—the question stops being "will this code work?" and becomes "what else might this code do?"

The answer from the industry: give AI its own computer. A real one. With its own kernel.

That's what a microVM is. And suddenly everyone is building them.

Containers — What They Solve

Containers solve packaging and deployment. Docker didn't get millions of developers by accident. But containers share the host operating system's kernel. Every container on your machine talks to the same kernel. If a process inside a container finds a kernel vulnerability, it can escape. Game over.

For normal workloads, this risk is manageable. You control the code. You vet dependencies. You patch kernels. The attack surface exists, but you manage it with trust.

AI code execution blows that trust model apart. An AI agent might:

- Install arbitrary npm packages (some of which are malicious)

- Execute hallucinated code with unintended side effects

- Make network calls to unexpected endpoints

- Write files in places you didn't anticipate

You're not running your code anymore. You're running code generated seconds ago by a statistical model. Nobody reviewed it. Nobody approved the dependencies.

Containers give you namespace isolation—separate views of the filesystem and network. MicroVMs give you hardware-enforced separation with a dedicated kernel. One is a curtain. The other is a wall.
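You can see the curtain for yourself. Here is a minimal sketch, assuming Python is available both on the host and inside the sandbox; `kernel_release` and `same_kernel` are hypothetical helper names, not part of any tool mentioned here. Run it in both places and compare.

```python
import platform


def kernel_release() -> str:
    """Return the release string of the kernel this process is talking to."""
    return platform.release()


def same_kernel(host_release: str, sandbox_release: str) -> bool:
    """True when both release strings match after trimming whitespace."""
    return host_release.strip() == sandbox_release.strip()


if __name__ == "__main__":
    # Run once on the host and once inside the container or microVM,
    # then compare the two printed values.
    print(kernel_release())
```

In a container on a Linux host, the value normally matches the host, because there is only one kernel. In a microVM it reflects whatever guest kernel was booted. Treat a match as a hint rather than proof: a guest could happen to boot the same kernel version.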

Apple just told you which one they believe in. When they open-sourced their container runtime in 2025, they didn't build another Docker. Every single container runs inside its own lightweight Linux VM, powered by Apple's Virtualization.framework—a dedicated kernel per container, not a shared one. That's not a performance trade-off they stumbled into. It's a security architecture they chose on purpose. Each VM only mounts the data it actually needs, eliminating the shared-VM pattern where the host pre-exposes everything just in case. They still consume standard OCI images, so compatibility isn't the sacrifice. Trust is the point. When the company that builds the most locked-down consumer operating system on earth designs a container runtime from scratch, and the first architectural decision is "every container gets its own kernel"—that's not an opinion about containers. That's a verdict.

The Landscape: Everyone Showed Up

Eighteen months ago, the AI sandbox space barely existed. Now count the ones I've come across—there might be more that I've missed.

Purpose-Built AI Sandboxes

| Platform | Isolation | Cold Start | What Stands Out |
|---|---|---|---|
| E2B | Firecracker microVMs | <200ms | Open-source (Apache-2.0), built for AI agents, Python/JS SDKs. ~$0.05/hr per vCPU |
| Daytona | Docker (Kata/Sysbox optional) | <90ms warm | Pivoted from dev environments to AI execution in late 2024. 50k+ GitHub stars |
| Modal | gVisor containers | Fast | Python-native, real GPU support (consumer to datacenter), SOC 2 Type 2 |
| Blaxel | Firecracker microVMs | 25ms resume | "Perpetual sandboxes": state preserved indefinitely. YC-backed, $7.3M seed |
| Together AI | VM snapshots | 500ms hot | Hot-swappable VMs up to 64 vCPU/128GB RAM, Git-versioned storage |
| Microsandbox | libkrun microVMs | n/a | Self-hosted, open-source, Rust-built, MCP server native. Experimental |

The Hyperscalers

| Platform | Isolation | What They Bring |
|---|---|---|
| AWS Bedrock AgentCore | Firecracker microVMs | Each agent session gets its own microVM (up to 8hrs). Cloned VMs share clean memory pages, copy-on-write for isolation. The same Firecracker that powers Lambda and Fargate, now pointed at agents |
| Azure Container Apps Dynamic Sessions | Hyper-V sandboxes | Ephemeral sandboxes for LLM-generated code execution. Hyper-V isolation (hypervisor, not namespace). Serverless GPU sessions in early access. 220s max execution per call |
| Google Agent Sandbox | gVisor on GKE | Each pod gets its own gVisor kernel, network, and filesystem. Pod Snapshots for instant provisioning. Open-source via Kubernetes SIG Apps (CNCF). Native Kubernetes integration |

The pattern is loud. AWS gave agents Firecracker, the same isolation that runs Lambda for millions of customers. Azure wrapped Hyper-V, the hypervisor that runs their entire cloud, into ephemeral sandboxes you can spin up from a Container Apps API call. Google built gVisor to protect Search and Gmail, and now it's the kernel boundary for agent code on GKE. None of them settled for plain shared-kernel containers. Every one of them reached for their strongest isolation primitive and pointed it at AI.

Broader Platforms Adding Sandbox Capabilities

| Platform | Isolation | What They Bring |
|---|---|---|
| Fly.io | Firecracker | <1s boot, REST API, global Anycast network (18 regions) |
| Vercel | Firecracker microVMs | AI agent code execution. GA (Jan 2026), 45min–5hr session limits |
| Cloudflare | Containers (orchestrated via Workers) | Full Linux sandbox SDK at 330+ edge locations. Workers layer has 0ms cold starts (V8 isolates); container sandboxes have real cold starts |
| Edera | Krata (custom Xen) | Strongest isolation: dedicated kernel per container. $15M Series A ($20M total). GPU isolation for AI workloads |
| Northflank | Multiple backends | Full PaaS, 2M+ isolated workloads/month. Running since 2019 |
| SlicerVM | Firecracker / Cloud Hypervisor | Self-hosted. "Firecracker for humans." ~300ms boot, REST API + Go SDK, ZFS snapshots, GPU passthrough (VFIO) |

Six purpose-built AI sandbox platforms. Three hyperscalers. Six broader platforms adding sandbox capabilities. A year ago, most of these either didn't exist or weren't targeting AI workloads.

That's not a feature. That's a category.

Where the Tech Comes From

Almost none of the underlying isolation technology was built for AI. The hyperscalers built it to solve their own multi-tenancy problems. Running millions of untrusted workloads on shared hardware, they needed guarantees that customers couldn't touch each other. The AI sandbox companies are standing on those shoulders.

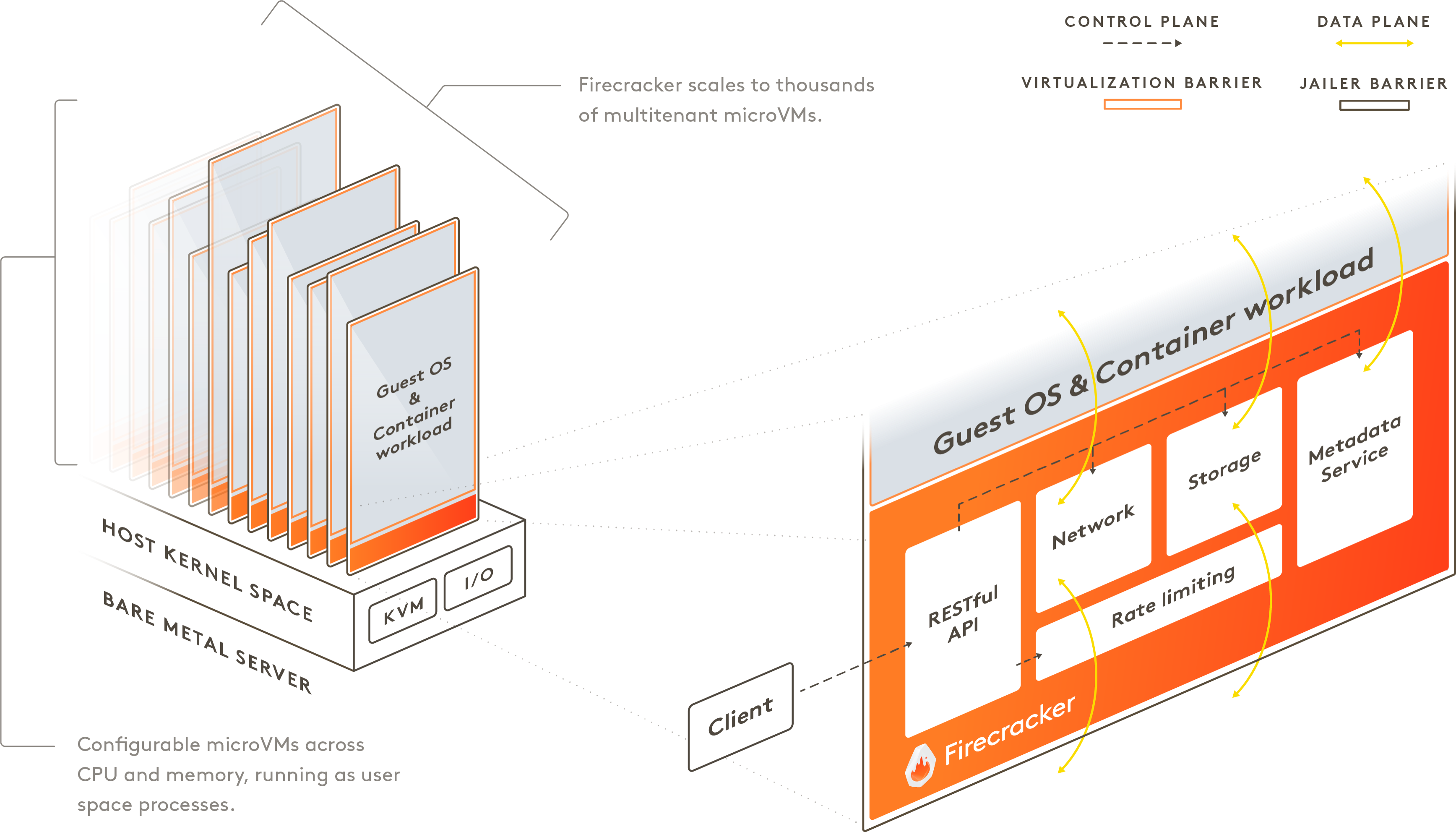

Firecracker is the most common foundation. Amazon built it for Lambda and Fargate. It boots a microVM in ~125ms with ~5MB memory overhead. Open-sourced in 2018. E2B, Blaxel, Fly.io, Vercel, and Northflank all use it. When you see "microVM" in an AI sandbox pitch, it's probably Firecracker underneath.
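To make "boots a microVM" less abstract: Firecracker is driven through a small REST API served over a Unix socket. The sketch here only builds the sequence of requests that configure and start one VM, without sending them. The endpoint and field names follow Firecracker's getting-started guide as I understand it, so check them against the version you run. The function name, file paths, and boot arguments are my own assumptions for illustration.

```python
import json
from typing import Any


def firecracker_boot_requests(
    kernel_path: str,
    rootfs_path: str,
    vcpus: int = 1,
    mem_mib: int = 128,
) -> list[tuple[str, str, dict[str, Any]]]:
    """Build the (method, path, body) calls that configure and start one microVM."""
    return [
        # Size the guest: number of vCPUs and memory in MiB.
        ("PUT", "/machine-config", {"vcpu_count": vcpus, "mem_size_mib": mem_mib}),
        # Point the VM at its own kernel image. This is the "dedicated kernel" part.
        ("PUT", "/boot-source", {
            "kernel_image_path": kernel_path,
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",  # assumed example args
        }),
        # Attach a root filesystem image as the root block device.
        ("PUT", "/drives/rootfs", {
            "drive_id": "rootfs",
            "path_on_host": rootfs_path,
            "is_root_device": True,
            "is_read_only": False,
        }),
        # Start the guest.
        ("PUT", "/actions", {"action_type": "InstanceStart"}),
    ]


if __name__ == "__main__":
    # Hypothetical paths; substitute your own kernel and rootfs images.
    for method, path, body in firecracker_boot_requests("/tmp/vmlinux", "/tmp/rootfs.ext4"):
        print(method, path, json.dumps(body))
```

Four requests and a guest kernel is running. The sandbox platforms in the tables wrap this kind of sequence in SDKs, snapshots, and scheduling.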

SlicerVM is one that I've used a bit and really like. It's an interesting case study in where Firecracker is headed. Their pitch is "Firecracker for humans"—install it on bare-metal Ubuntu, hit a REST API, get a microVM in ~300ms. ZFS snapshots for instant disk cloning. PCI passthrough for GPUs and TPUs. No Kubernetes. No orchestration layer you didn't ask for. It's the closest thing to "I want Firecracker without needing to be an AWS engineer." For teams that want to self-host their own sandbox infrastructure without adopting a full platform, that's a real option. The fact that someone can build a viable product just by making Firecracker accessible tells you how foundational that technology has become.

gVisor is Google's approach—a user-space kernel that intercepts system calls. Your application thinks it's talking to Linux, but it's talking to gVisor, which decides what to allow. Modal and Google's Agent Sandbox run on it. Less overhead than a full VM, more isolation than a container. A middle path.
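A toy model helps here. This is not gVisor's code or API, just a conceptual sketch of the idea: the application's calls land in a user-space layer that serves them itself and refuses anything it does not implement, so nothing falls through to the host kernel by default. Every name in it is hypothetical.

```python
from typing import Callable


class ToyUserSpaceKernel:
    """Conceptual stand-in for a user-space kernel.

    It serves a small set of calls from its own in-memory state and
    rejects everything else instead of forwarding it to the host.
    """

    def __init__(self) -> None:
        self._files: dict[str, bytes] = {}
        self._handlers: dict[str, Callable[..., object]] = {
            "write_file": self._write_file,
            "read_file": self._read_file,
        }

    def syscall(self, name: str, *args: object) -> object:
        """Dispatch a call by name, refusing anything not implemented here."""
        handler = self._handlers.get(name)
        if handler is None:
            raise PermissionError(f"syscall {name!r} is not implemented by the sandbox kernel")
        return handler(*args)

    def _write_file(self, path: str, data: bytes) -> int:
        # The write lands in the sandbox's own state, not on the host disk.
        self._files[path] = data
        return len(data)

    def _read_file(self, path: str) -> bytes:
        return self._files[path]


if __name__ == "__main__":
    kernel = ToyUserSpaceKernel()
    kernel.syscall("write_file", "/tmp/note", b"hello")
    print(kernel.syscall("read_file", "/tmp/note"))
    try:
        kernel.syscall("mount", "/dev/sda1", "/mnt")
    except PermissionError as err:
        print("blocked:", err)
```

The real thing implements a large share of the Linux syscall surface this way, which is where both the extra isolation and the compatibility gaps come from.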

Kata Containers came from the OpenStack community, merging Intel's Clear Containers and Hyper's runV. OCI-compatible, Kubernetes-native. The enterprise answer.

libkrun is the newcomer. Library-based KVM microVMs you can embed directly into applications. Microsandbox uses it. So does Podman. Think of it as "add microVM isolation to your app like adding a library."

Krata is Edera's custom job—a Xen-based hypervisor with a Rust control plane, giving each container its own dedicated kernel. Maximum isolation, maximum overhead. When your threat model assumes the kernel itself is compromised, this is what you reach for. It's the bunker option.

V8 Isolates are Cloudflare's play. The same JavaScript engine sandboxing Chrome relies on in the browser, running server-side. Zero-millisecond cold starts. Not a VM. Not a container. A JavaScript isolation boundary running at 330+ edge locations. Limited to what V8 can run, but nothing touches it on speed and cost.

The pattern: Amazon, Google, Intel, and the Xen project solved hard isolation problems for their own infrastructure and open-sourced the results. Now a wave of startups is repurposing that technology for AI agents. The sandbox companies are, in most cases, the developer experience layer on top of hyperscaler plumbing.

How to Think About Choosing

If you're evaluating this space, a few questions cut through the noise.

What's your threat model?

- AI agent running your own vetted code → containers are probably fine

- AI agent running generated code with network access → microVM minimum

- Multi-tenant AI platform serving external users → dedicated kernel (Edera-level) or hypervisor isolation
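The three bullets above fit in a few lines of code. This is a sketch of the rule of thumb, not a standard. The names are hypothetical, and it makes one assumption the list does not state: unvetted code is pushed to a microVM whether or not it has network access.

```python
from enum import Enum


class Isolation(Enum):
    CONTAINER = "container"
    MICROVM = "microVM"
    DEDICATED_KERNEL = "dedicated kernel or hypervisor isolation"


def minimum_isolation(code_is_vetted: bool, multi_tenant: bool) -> Isolation:
    """Map a coarse threat model to the weakest boundary that still fits it."""
    if multi_tenant:
        # External users sharing hardware: assume tenants are hostile to each other.
        return Isolation.DEDICATED_KERNEL
    if not code_is_vetted:
        # Generated or third-party code nobody reviewed before execution.
        return Isolation.MICROVM
    # Your own reviewed code: the shared-kernel risk is manageable.
    return Isolation.CONTAINER


if __name__ == "__main__":
    print(minimum_isolation(code_is_vetted=False, multi_tenant=False).value)
```

The ordering is the point: multi-tenancy dominates everything else, and "vetted" has to mean a human actually reviewed the code and its dependencies.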

What's your latency budget?

- Sub-100ms → Blaxel (25ms resume), Daytona (<90ms), Cloudflare Workers (0ms for V8)

- Sub-second → E2B (<200ms), Together AI (500ms hot start), Fly.io (<1s)

- Seconds are fine → most options work, optimize for features
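If you'd rather filter than eyeball, here is a minimal sketch using only the start-time figures quoted in this article. They are vendor-style upper bounds, not benchmarks I ran, and they mix cold starts, warm starts, and resumes. The dictionary and function names are hypothetical.

```python
# Upper-bound start times in milliseconds, as quoted in this article.
# These mix cold start, warm start, and resume figures, so compare with care.
QUOTED_START_MS = {
    "Cloudflare Workers": 0,   # V8 isolates, not the container sandbox
    "Blaxel": 25,              # resume
    "Daytona": 90,             # warm
    "E2B": 200,
    "Together AI": 500,        # hot start
    "Fly.io": 1000,
}


def within_budget(budget_ms: int) -> list[str]:
    """Return platforms whose quoted start time fits the budget, fastest first."""
    fits = [(ms, name) for name, ms in QUOTED_START_MS.items() if ms <= budget_ms]
    return [name for _, name in sorted(fits)]


if __name__ == "__main__":
    print(within_budget(100))
```

Swap in numbers you measured yourself before making a real decision. Start-up latency depends heavily on image size, region, and whether a snapshot is already warm.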

Do you need GPUs?

- Yes → Modal, Beam, Together AI. GPU passthrough in microVMs is still maturing elsewhere. Edera's doing GPU isolation specifically for AI if you need the security story.

Self-hosted requirement?

- SlicerVM (run-anywhere, bare-metal), Microsandbox (libkrun, open-source), E2B (Apache-2.0), Beam/beta9 (AGPL-3.0)

Already on a major platform?

- GCP/Kubernetes → Google Agent Sandbox

- Vercel ecosystem → Vercel Sandbox

- Cloudflare → Sandbox SDK

Pick based on your actual constraints, not the marketing. Most of these platforms are less than two years old. The ones with open-source foundations and standard interfaces give you the most room to maneuver when the market consolidates—and it will.

It's Not Just AI Code Execution

AI agents writing and running code is the headline, but it's not the only reason sandboxes are exploding. Cloud providers have used isolation for multi-tenancy since forever—that's literally where Firecracker and gVisor came from. What changed is the surface area.

Look at OpenClaw. The open-source AI agent that reads your email, browses the web, installs third-party skills, and sends messages on your behalf. Researchers just found 341 malicious skills on ClawHub stealing user data. That's not a hypothetical threat model. That's a Wednesday. Any agent with access to private data, exposure to untrusted content, and the ability to communicate externally needs isolation that actually isolates. Security researchers called it the "lethal trifecta"—and OpenClaw has all three.

Security teams are spinning up sandboxes for malware analysis and threat research, where running code you don't trust is the whole point. Testcontainers—the developer testing library that spins up disposable databases and services for integration tests—is getting pulled toward stronger isolation as test environments grow more complex and CI pipelines run less trusted code. Even regulated industries that were running everything on-prem are looking at sandboxed execution for compliance-sensitive workloads.

The pattern is the same everywhere: more software running more autonomously means more situations where you can't vet what's executing. The sandbox isn't just an AI story. It's becoming the default answer to "what happens when you can't trust the code?"

What This Actually Means

Three things are happening at once.

The AI safety conversation just got infrastructure. When people talk about AI safety, they usually mean alignment—making models want the right things. Sandboxing is the other half: making sure it doesn't matter what the model wants, because the blast radius is contained. Both matter. Only one has shipping products you can deploy today.

The container era isn't ending, but its ceiling is visible. Containers solved deployment and packaging. They didn't solve trust. For AI workloads where nobody vetted the code before execution, the industry is moving to stronger isolation boundaries. Docker building a microVM isn't a side project. It's a signal.

This market is going to consolidate fast. Twelve-plus sandbox companies is a gold rush, not an equilibrium. Expect the cloud providers to copy or acquire the best primitives within 18 months. Two or three purpose-built platforms will win their niches. The rest become features inside larger platforms or quietly fold. If you're building on one of these today, bet on the open-source foundations. Portability is insurance.

Containers keep software organized. Sandboxes keep software contained. The distinction matters because the code your AI agent runs today wasn't written by your team, wasn't reviewed by your team, and might do things your team never intended. The walls need to be real.

There's a tell in how the major AI players are moving. OpenAI Codex and Claude Code both recently changed their installers to default to full system access. Desktop AI apps like Claude for Work are shipping with deep local integration—and the mere existence of these tools is already pressuring SaaS valuations. The direction is clear: AI isn't staying in the browser. It's moving onto your machine.

Running a microVM alone isn't enough. When everyone is building apps on AI-powered workstations that need higher trust, isolation is just the first layer. The VM gives you the wall. The policy around it is what makes the wall useful:

- What filesystem paths can the sandbox see?

- Is egress locked down, or can it call home to anywhere?

- How do you pass short-lived credentials into a sandbox without leaving secrets on disk?
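Those three questions can be written down as a policy object. What follows is a sketch under stated assumptions, not any platform's real configuration format: `SandboxPolicy`, `ShortLivedCredential`, and `issue_credential` are hypothetical names, and enforcement would live in the hypervisor, the network layer, and your secrets broker rather than in this code.

```python
import posixpath
import secrets
import time
from dataclasses import dataclass
from pathlib import PurePosixPath
from typing import Optional


@dataclass(frozen=True)
class SandboxPolicy:
    readable_paths: tuple[str, ...] = ()
    egress_allowlist: frozenset[str] = frozenset()
    credential_ttl_seconds: int = 300

    def can_read(self, path: str) -> bool:
        """Allow absolute paths at or under an allowed root, after collapsing '..'."""
        if not path.startswith("/"):
            return False
        # normpath collapses "..", so "/workspace/../etc" cannot sneak through.
        # It does not resolve symlinks; a real enforcer must handle those too.
        target = PurePosixPath(posixpath.normpath(path))
        for root in self.readable_paths:
            allowed = PurePosixPath(posixpath.normpath(root))
            if target == allowed or allowed in target.parents:
                return True
        return False

    def can_connect(self, host: str) -> bool:
        """Default-deny egress: only exact hostnames on the allow-list pass."""
        return host.lower() in self.egress_allowlist


@dataclass(frozen=True)
class ShortLivedCredential:
    token: str
    expires_at: float

    def is_valid(self, now: Optional[float] = None) -> bool:
        current = time.time() if now is None else now
        return current < self.expires_at


def issue_credential(policy: SandboxPolicy, now: Optional[float] = None) -> ShortLivedCredential:
    """Mint a random token that expires after the policy's TTL.

    Hand it to the sandbox in memory (for example over stdin or an
    environment variable) rather than writing it to the disk image.
    """
    issued_at = time.time() if now is None else now
    return ShortLivedCredential(
        token=secrets.token_urlsafe(32),
        expires_at=issued_at + policy.credential_ttl_seconds,
    )


if __name__ == "__main__":
    policy = SandboxPolicy(
        readable_paths=("/workspace",),
        egress_allowlist=frozenset({"pypi.org"}),
    )
    print(policy.can_read("/workspace/../etc/passwd"))
    print(policy.can_connect("pypi.org"))
```

Everything here is default-deny: no paths, no hosts, and a credential that dies on its own in minutes. That's the posture worth copying, whatever syntax your platform uses.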

In 2026, sandbox isolation is becoming table stakes. The real question is what your isolation strategy looks like.